Live Transcoder Enables Multi-Channel Synchronous Transport

The standout feature of the new Live Transcoder 1.13 is Synchronous Multi-Channel/Multi-System Transport: the ability to transport camera feeds from a venue to a studio or the cloud, synchronized to within one frame.

Why we need multi-channel synchronous streaming

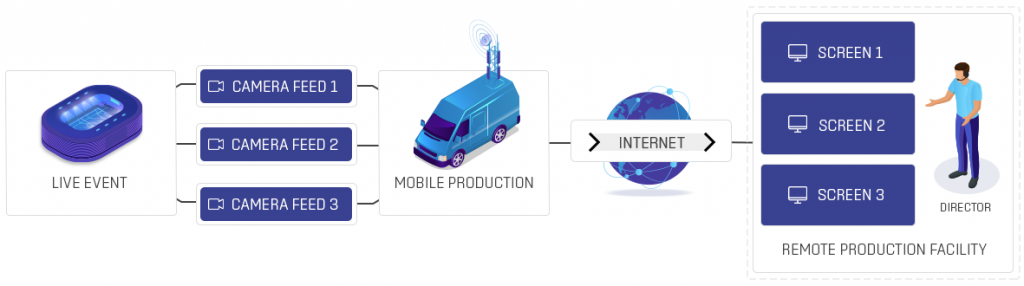

One of the most significant obstacles in remote live video production workflows is the synchronized transport of multiple video feeds: the feeds captured by multiple cameras at the venue must stay synchronized on playback in the remote production facility. Synchronization is required with single-frame precision, and the transport must add minimal latency.

The picture above depicts such a scenario. A live event is captured from multiple angles using multiple cameras. The local video infrastructure is designed to keep live camera feeds in sync. The challenge is transporting the video from the venue to the remote production facility or the production cloud. Long-distance transport often happens over managed or unmanaged IP networks such as the internet, where delivery is typically best-effort. Keeping the individual camera feeds synchronized is therefore challenging but crucial for live production.

Mixing and cutting the multiple camera feeds wouldn’t be possible without synchronization. The switch from one camera to another would cause a jump in the timeline, and the viewer would miss a critical moment.
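
To put a number on that jump, the sketch below converts a difference in transport delay between two feeds into frames. The function name and the delay values are illustrative assumptions, not measurements from a real deployment.

```python
def skew_in_frames(delay_a_ms: float, delay_b_ms: float, fps: float) -> float:
    """Return how many frames feed B lags behind feed A.

    delay_a_ms / delay_b_ms are hypothetical end-to-end transport delays.
    """
    frame_period_ms = 1000.0 / fps  # duration of one frame in milliseconds
    return (delay_b_ms - delay_a_ms) / frame_period_ms


# Two feeds at 50 fps whose transport delays differ by 50 ms
# are 2.5 frames apart when they reach the mixer.
print(skew_in_frames(120, 170, 50))
```

Even a modest difference in network delay therefore exceeds the single-frame budget, which is why the feeds cannot simply be played out as they arrive.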

Synchronous video transport for remote production

Synchronizing video feeds for long-haul contribution is not a new concept. Professional broadcast productions have been dealing with synchronous transport for a long time. Typically, they either had complete control over the production environment, including the transport, or they simply produced the video at the venue and streamed only the resulting program.

Live event production uses commodity technologies such as COTS servers, the cloud, and standard internet connections. Increasingly, events are produced remotely to save cost, reduce complexity, and enable professional live production for events beyond the top tier.

Typically, all camera feeds are encoded with one or, more often, multiple video encoders. The feeds are then transported over the internet or a managed IP connection, and received and decoded at the remote facility or in the cloud.

Bridge Live and Live Transcoder in SDI and NDI Workflows

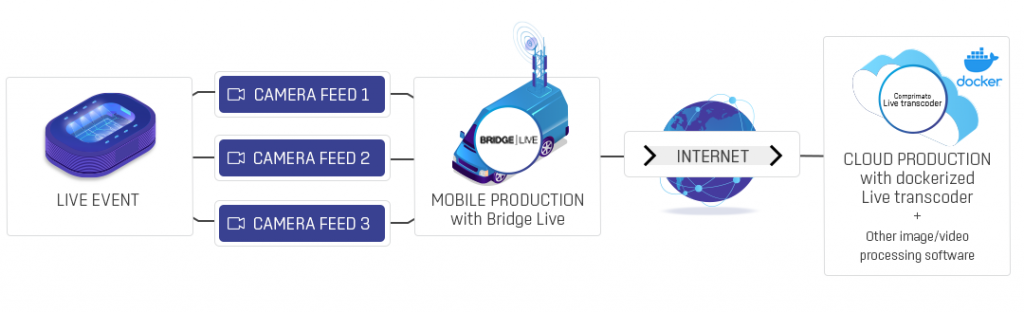

In such scenarios, Live Transcoder software is deployed at the venue and is responsible for encoding the IP video feeds from the cameras. The cameras produce NDI or sometimes an MPEG transport stream (TS). However, in most cases, the cameras are still SDI-based; therefore, an SDI encoder, such as Bridge Live, is needed.

Bridge Live is a product we developed together with AJA. It's called a "bridge" because you can use it to link SDI-based appliances with IP-based workflows and vice versa. Otherwise, it has the same features and capabilities as Live Transcoder.

Both Live Transcoder and Bridge Live determine the source clock frequency of the input video and embed the frequency information into the encoded feeds. Video encoding is available in many formats, including H.264, HEVC, and JPEG2000 TR01. A typical bitrate for H.264 transport is 10 to 40 Mbps, and JPEG2000 TR01 ranges between 100 and 250 Mbps for 1080p60 video. After encoding, the video is transported using the SRT protocol over an internet connection, which can never be 100% reliable. SRT keeps latency low and recovers lost packets through retransmission, so the stream arrives intact as long as the loss can be repaired within the configured latency window.

All cameras need to operate on the same frequency for this to work. Genlocking the cameras solves this issue.
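
When planning the venue uplink, the bitrate ranges quoted above can be turned into a rough aggregate estimate. The following is a minimal sketch: the function name, the dictionary keys, and the 25% headroom for retransmissions and protocol overhead are assumptions chosen for illustration, not figures from Live Transcoder or the SRT specification.

```python
from typing import Tuple

# Per-feed bitrate ranges in Mbps, taken from the text above.
BITRATE_RANGES_MBPS = {
    "h264": (10, 40),
    "jpeg2000_tr01": (100, 250),
}


def aggregate_bitrate_mbps(codec: str, cameras: int,
                           headroom: float = 0.25) -> Tuple[float, float]:
    """Estimate the (low, high) uplink bandwidth in Mbps for several feeds.

    `headroom` is an assumed safety margin, not a measured value.
    """
    low, high = BITRATE_RANGES_MBPS[codec]
    factor = cameras * (1 + headroom)
    return (low * factor, high * factor)


# Four H.264 camera feeds with 25% headroom.
print(aggregate_bitrate_mbps("h264", 4))
```

With these assumptions, four H.264 feeds need roughly 50 to 200 Mbps of uplink, while four JPEG2000 TR01 feeds need roughly 500 to 1250 Mbps.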

On the receiving end, we use Live Transcoder or Bridge Live to decode the video feeds and recreate the clock frequency of the source video. The presentation timestamps and playback frequency govern the playback to SDI or even NDI. In the case of SDI, the frequency of the local SDI infrastructure also matters.

The source video feeds are encoded and transmitted over the internet without dropping a frame. The feeds are decoded and played synchronously on the receiving end, whether that is a remote production facility or a cloud instance. The following picture outlines the complete workflow.
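
The idea of timestamp-governed playback can be illustrated with a small sketch. It is not Live Transcoder's implementation: the function name and data layout are hypothetical, and it assumes that all feeds carry presentation timestamps derived from a common clock (here the 90 kHz tick rate used by MPEG transport streams) and that each feed is buffered as a queue of `(pts, frame)` pairs.

```python
from collections import deque

PTS_CLOCK_HZ = 90_000  # MPEG-TS presentation timestamps tick at 90 kHz


def pop_synchronized_set(queues, fps):
    """Pop one frame per feed so that all popped frames belong to the same instant.

    `queues` maps a feed name to a deque of (pts, frame) pairs in PTS order.
    Returns {feed name: frame}, or None if some feed has no matching frame yet.
    """
    if not queues:
        return None
    half_period = PTS_CLOCK_HZ / fps / 2  # tolerance: half a frame, in ticks
    while all(queues.values()):  # every feed has at least one buffered frame
        # The newest head PTS is the earliest instant all feeds can present.
        target = max(q[0][0] for q in queues.values())
        stale = [q for q in queues.values() if q[0][0] < target - half_period]
        if not stale:
            return {name: q.popleft()[1] for name, q in queues.items()}
        for q in stale:
            q.popleft()  # discard a frame that is older than the target instant
    return None


# cam2 started one frame later than cam1 and has 10 ticks of timestamp jitter.
feeds = {
    "cam1": deque([(0, "a0"), (1800, "a1"), (3600, "a2")]),
    "cam2": deque([(1810, "b1"), (3610, "b2")]),
}
print(pop_synchronized_set(feeds, 50))  # {'cam1': 'a1', 'cam2': 'b1'}
print(pop_synchronized_set(feeds, 50))  # {'cam1': 'a2', 'cam2': 'b2'}
print(pop_synchronized_set(feeds, 50))  # None
```

The sketch aligns the feeds by discarding frames from whichever feed is ahead; a real player would also pace the output against the recreated source clock rather than emit frame sets as fast as they become available.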

Cloud and hybrid workflows

More and more live video production is moving from traditional physical facilities to cloud workflows. Combining physical Bridge Live appliances at the venue with cloud instances running dockerized Live Transcoder software makes it possible to transport the content synchronously to a cloud such as AWS and process it further with software such as vMix.

Sometimes the challenge with cloud workflows is the requirement to contribute the video feeds to the cloud using the NDI protocol. Each NDI feed takes several hundred megabits of bandwidth, which is not always practical or even possible. In such cases, Live Transcoder works as a cloud video gateway: it receives low-bitrate H.264 feeds, synchronizes them, and converts them to NDI with very low latency.

Beyond the simple transport use case, Live Transcoder provides a rich feature set for other uses, such as ABR transcoding, standards conversion (framerate conversion will be available in autumn 2022), basic video processing and filters, and HLS packaging.

Having the same software installed on the Bridge Live SDI encoder at the venue, on a standard COTS hardware unit at the production facility, and on the cloud instance is a real benefit in practice, where tasks such as operator training and API integration play a significant role.

Availability and pricing

Get in touch with us to become a customer and get a price plan that fits your needs. Live Transcoder 1.13 and all future releases are available to our current clients with active maintenance. Customers without a maintenance agreement can access bug fixes in new versions but will not receive access to newly added features.